Bolzmann Machine

Boltzmann Machine

- Stochastic recurrent neural network

- Introduced by Hinton and Sejnowski

- Learn internal representations

- Problem: unconstrained connectivity

Representation

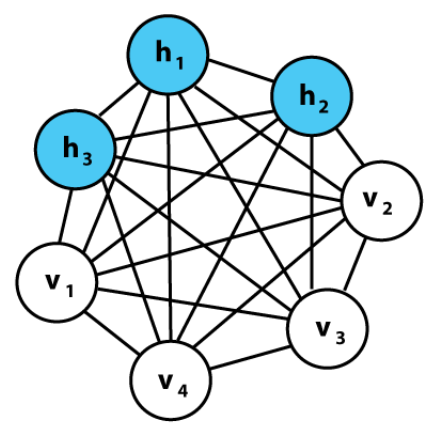

Model can be represented by Graph:

Undirected graph

Nodes: States

States

Types:

- Visible states

- Represent observed data

- Can be input/output data

- Hidden states

- Latent variable we want to learn

- Bias states

- Always one to encode the bias

Binary states

- unit value $\in \{0, 1\}$

Stochastic

Decision of whether state is active or not is stochastically

Depend on the input $$ z_{i}=b_{i}+\sum_{j} s_{j} w_{i j} $$

- $b_i$: Bias

- $S_j$: State $j$

- $w_{ij}$: Weight between state $j$ and state $i$

$$ p\left(s_{i}=1\right)=\frac{1}{1+e^{-z_{i}}} $$

Connections

Graph can be fully connected (no restrictions)

Unidircted: $$ w_{ij} = w_{ji} $$

No self connections: $$ w_{ii} = 0 $$

Energy

Energy of the network $$ \begin{aligned} E &= -S^TWS - b^TS \\ &= -\sum_{i<j} w_{i j} S_{i} S_{j}-\sum_{i} b_{i} s_{i} \end{aligned} $$ Probability of input vector $v$ $$ p(v)= \frac{e^{-E(v)}}{\displaystyle \sum_{u} e^{-E(u)}} $$ Updating the nodes

decrease the Energy of the network in average

reach Local Minimum (Equilibrium)

Stochastic process will avoid local minima $$ \begin{array}{c} p\left(s_{i}=1\right)=\frac{1}{1+e^{-z_{i}}} \\ z_{i}=\Delta E_{i}=E_{i=0}-E_{i=1} \end{array} $$

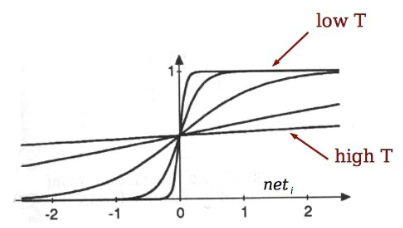

Simulated Annealing

Use Temperature to allow for more changes in the beginning

Start with high temperature

“anneal” by slowing lowering T

Can escape from local minima 👏

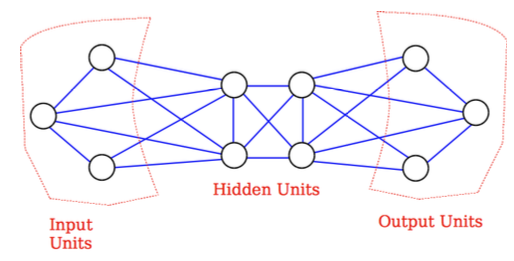

Search Problem

Input is set and fixed (clamped)

Annealing is done

Answer is presented at the output

Hidden units add extra representational power

Learning problem

Situations

- Present data vectors to the network

Problem

- Learn weights that generate these data with high probability

Approach

- Perform small updates on the weights

- Each time perform search problem

Pros & Cons

✅ Pros

- Boltzmann machine with enough hidden units can compute any function

⛔️ Cons

- Training is very slow and computational expensive 😢

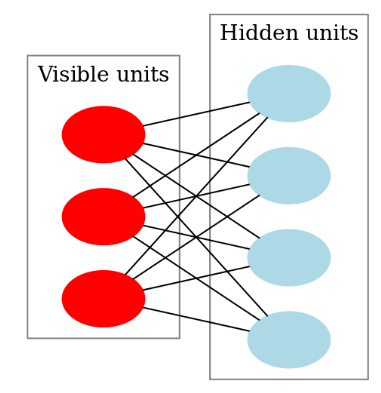

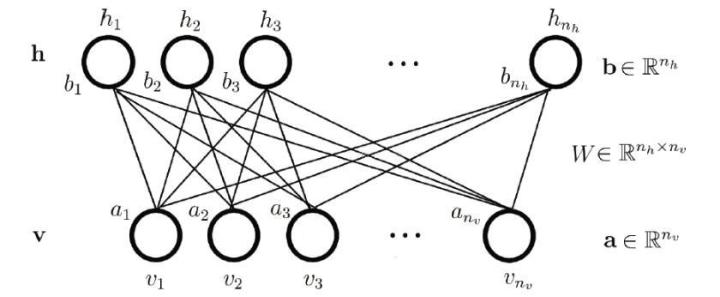

Restricted Boltzmann Machine

Boltzmann machine with restriction

Graph must be bipartite

Set of visible units

Set of hidden units

✅ Advantage

- No connection between hidden units

- Efficient training

Energy

Energy: $$ \begin{aligned} E(v, h) &= -a^{\mathrm{T}} v-b^{\mathrm{T}} h-v^{\mathrm{T}} W h \\ &= -\sum_{i} a_{i} v_{i}-\sum_{j} b_{j} h_{j}-\sum_{i} \sum_{j} v_{i} w_{i j} h_{j} \end{aligned} $$ Probability of hidden unit: $$ p\left(h_{j}=1 \mid V\right)=\sigma\left(b_{j}+\sum_{i=1}^{m} W_{i j} v_{i}\right) $$ Probability of input vector: $$ p\left(v_{i} \mid H\right)=\sigma\left(a_{i}+\sum_{j=1}^{F} W_{i j} h_{j}\right) $$

$$ \sigma(x)=\frac{1}{1+e^{-x}} $$

Free Energy: $$ \begin{array}{l} e^{-F(V)}=\sum_{j=1}^{F} e^{-E(v, h)} \\ F(v)=-\sum_{i=1}^{m} v_{i} a_{i}-\sum_{j=1}^{F} \log \left(1+e^{z_{j}}\right) \\ z_{j}=b_{j}+\sum_{i=1}^{m} W_{i j} v_{i} \end{array} $$